4. Technical Foundations

Introduction

Now that we’ve explored how AI evolved into its current form, let’s lift the hood and examine the engine that powers large language models (LLMs). These systems are marvels of engineering, built on a foundation of interconnected components that work together to process and generate human-like text.

What will I get out of this?

By the end of this section, you will be able to:

- Explain the process of tokenization in LLMs, including how it transforms text into computational units and its impact on model performance and cost.

- Describe the role of context windows in LLMs, comparing their evolution from early 512-token models to modern systems with 200K-2M+ token capacities.

- Analyze how the Transformer architecture and attention mechanisms enable LLMs to process text more effectively than sequential models, including modern advances like Mixture-of-Experts (MoE).

- Differentiate between various types of “memory” implementations in LLMs, including conversation persistence, RAG, long-context approaches, and emerging memory layers.

- Evaluate the importance of quality training data in LLM development, considering factors such as diversity and accuracy.

- Identify the key components of LLM learning, including weights and biases, and explain their role in pattern recognition and text generation.

How Do LLMs Actually Produce Human-Like Text?

Large language models like GPT-4o, Claude, and Llama generate human-like text by leveraging advanced neural network architectures, vast training datasets, and probabilistic techniques. At their core, these models predict the next word (or token) in a sequence based on the context provided, producing coherent and contextually relevant text. Here’s a simplified breakdown of how this process works:

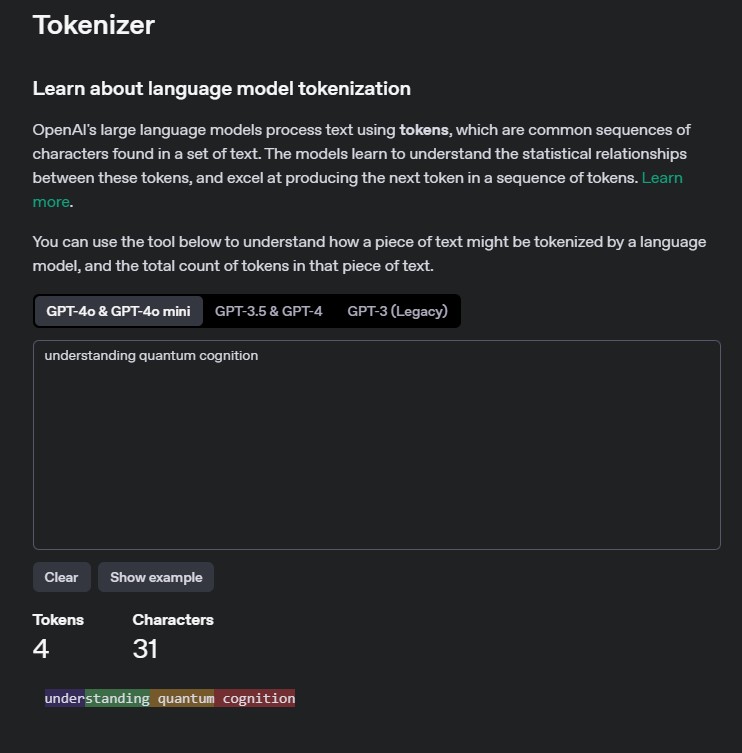

Tokenization

When we read a sentence, our minds don’t process it letter by letter. Instead, we recognize meaningful chunks – words, phrases, or even entire ideas. LLMs do something similar by breaking text into tokens. A token might be a single word, part of a word, or even a sequence of words depending on how common that sequence is in the model’s training data.

Concept: Tokens

Tokens are the fundamental units of text that LLMs process. They enable the model to break down language into manageable pieces for analysis and generation. Tokenization is the first step in transforming raw text into numerical representations for computation.

Remember: different tokenizers will split the text differently, and this can lead to different results!

For instance, the word ‘understanding’ might be split into ‘under’ and ‘standing,’ while other words such as ‘quantum’ or ‘cognition’ might be kept as a single token each. This varies from tokenizer to tokenizer. This flexibility allows LLMs to handle both familiar and unfamiliar text efficiently.

Contextual Understanding via Embeddings

Once tokenized, the input is transformed into embeddings – mathematical representations of tokens in a high-dimensional space.

These embeddings capture semantic relationships between words:

- Words with similar meanings (e.g., “cat” and “feline”) are placed closer together in this vector space.

- Positional encodings are added to these embeddings to help the model understand the order of words in a sentence.

Embeddings

We’ll revisit the topic of embeddings later in this chapter, but for now the important thing to remember is that embeddings form the foundation of transformer-based models like GPT, Claude, Llama, etc.

Think of embeddings as the “language” that AI models speak internally. Whether doing simple completion or complex retrieval tasks, the model is always working with embeddings under the hood.

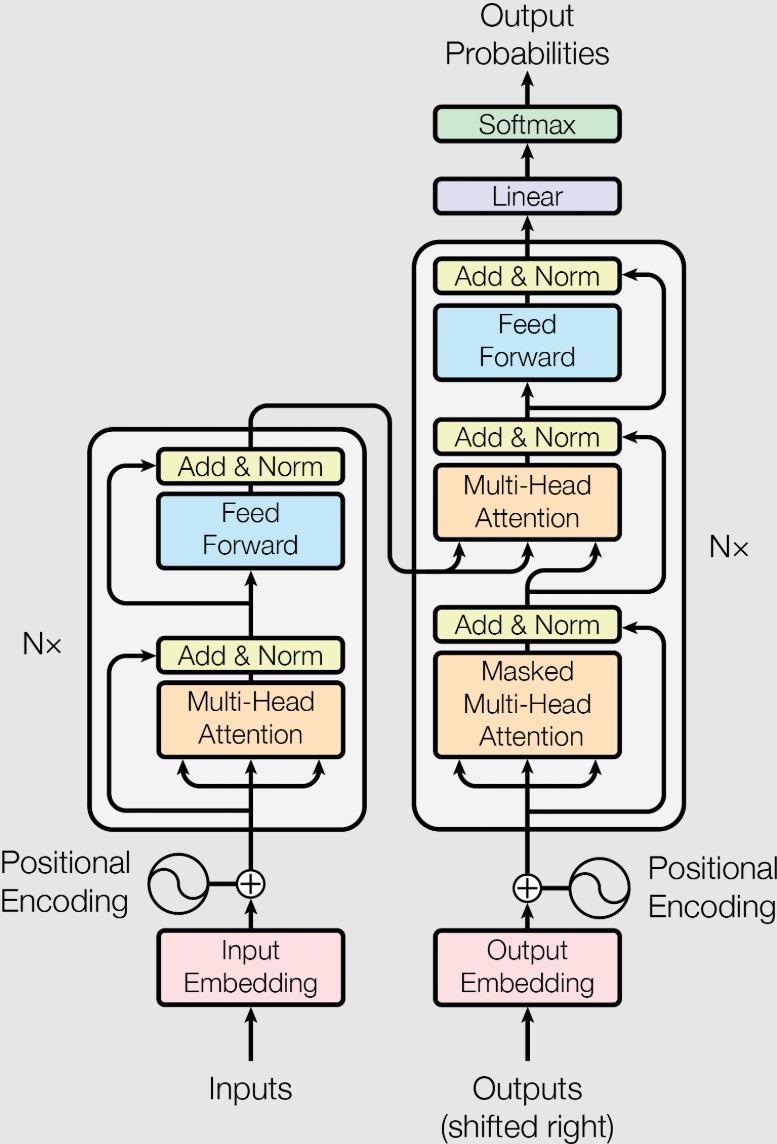

Transformer Architecture

Before 2017, AI models processed text sequentially – like reading a book one word at a time. This approach struggled with long-range dependencies in language (e.g., understanding how the start of a paragraph relates to its end). The Transformer architecture changed everything by enabling models to process entire sequences simultaneously. Introduced by Google’s researchers Vaswani et al. in 2017, it revolutionized the field of natural language processing.

Imagine analyzing a painting: instead of focusing on one corner at a time, you take in the whole image while zooming in on details that matter most. Transformers use this principle to understand context across an entire input sequence.

Attention Mechanisms

Here’s an example:

“The trophy didn’t fit in the suitcase because it was too big.”

What does “it” refer to – the trophy or the suitcase? Humans rely on context to resolve this ambiguity. Attention mechanisms give LLMs a similar ability by assigning importance (or “attention”) to different parts of an input sequence.

Concept: Attention Mechanisms

Attention mechanisms allow models to weigh the relevance of different tokens within an input sequence. This capability enables nuanced understanding by focusing on contextually important information.

Transformers take this further with multi-head attention, which lets them analyze multiple aspects of context simultaneously – like examining color, texture, and composition in a painting all at once.

Modern Architecture Advances

The base Transformer architecture has been significantly enhanced since 2017. Two key advances are particularly relevant in 2025/2026:

What it is: Instead of activating every parameter for every input, MoE models route each token to a subset of specialized “expert” sub-networks. A gating mechanism decides which experts handle each token.

Why it matters: MoE enables models with massive total parameter counts while keeping per-request compute manageable. DeepSeek V3 has 671 billion total parameters but activates only 37 billion per request – giving it the capability of a very large model at the cost of a much smaller one.

Examples: DeepSeek V3, Mixtral 8x7B, Qwen MoE variants.

What it is: Various techniques to make the attention mechanism more efficient, especially for very long sequences. These include Flash Attention, Grouped Query Attention (GQA), and Sliding Window Attention.

Why it matters: Standard attention has quadratic cost with sequence length – doubling the input quadruples the compute. Efficient attention variants make long-context models practical. This is how Gemini handles 2M+ token windows and Claude handles 200K tokens without astronomical costs.

Examples: Flash Attention 2 (used in most modern models), Ring Attention (for distributed long-context), Grouped Query Attention (in Llama 3.x, Mistral).

Autoregressive Text Generation

Autoregressive generation refers to a mechanism used by LLMs in which they predict the next token in a sequence based on the previous tokens. This process follows these steps:

- The model predicts the most likely next token based on the input and previously generated tokens.

- This predicted token is added to the sequence.

- The process repeats until a stopping criterion is met, such as reaching a maximum length or encountering an end-of-sequence (EOS) token.

Concept: EOS token

An end-of-sequence token is a special marker that signals to the model that it should stop generating further tokens. It acts as a boundary for text completion, ensuring that the output ends at an appropriate point.

Context Windows

A model’s context window represents its ability to “remember” and process information within a single interaction. For instance, a model with a 4,000-token context window can work with roughly 3,000 words of text at once.

Concept: Context Windows

Context windows define the maximum amount of input text an LLM can process at once, imposing practical limits on long-form tasks.

Modern models have pushed these boundaries dramatically:

| Era | Context Window | Approximate Words | Example |

|---|---|---|---|

| Early (2018-2020) | 512-2,048 tokens | 400-1,500 words | GPT-2 |

| Mid (2021-2023) | 4,096-32,768 tokens | 3,000-25,000 words | GPT-3.5, Claude 1 |

| Current (2024-2026) | 128K-200K tokens | 96,000-150,000 words | GPT-4o (128K), Claude Opus 4 (200K) |

| Extended (2025-2026) | 1M-2M+ tokens | 750,000-1,500,000+ words | Gemini 1.5 Pro (2M+) |

For perspective, each token represents approximately 4 characters or about 0.75 words in English. A 200K context window can process the equivalent of a 400-page book. Gemini’s 2M+ token window can handle multiple novels simultaneously.

Cost Implications

Context windows directly impact cost. On API usage from a provider, you pay for the number of tokens processed. Normally input tokens are cheaper, and output tokens are more expensive. A model that can handle 200K tokens doesn’t mean you should always send 200K tokens – good context management is both a performance and cost optimization.

Memory

Memory in LLMs refers to how these models maintain and manage information during and across conversations. However, it’s crucial to understand that this “memory” is fundamentally different from human memory.

LLMs are Stateless

LLMs are inherently stateless – each inference (generation of text) is completely independent. They have no built-in ability to remember previous interactions or maintain ongoing conversations. What we call “memory” in LLMs is actually implemented through external systems and careful management of inputs.

Approaches to Memory in 2025/2026

The way memory is implemented has evolved significantly. Here are the current approaches:

The simplest approach: include past messages in the prompt

This is what ChatGPT and similar chatbots do – they include the conversation history in each new request. As the conversation grows, older messages are summarized or dropped to fit the context window.

Advantages:

- Simple to implement

- No additional infrastructure needed

- Works with any model

Limitations:

- Bounded by context window size

- Increasing cost as conversation grows (more tokens per request)

- Older context may be lost in long conversations

Brute force: use a model with a massive context window

Models like Gemini (2M+ tokens) and Claude Opus 4 (200K tokens) can hold enormous amounts of information in a single interaction. Rather than implementing complex memory systems, you can load entire documents, codebases, or conversation histories.

Advantages:

- No retrieval infrastructure needed

- Model sees all context simultaneously

- Simpler architecture

Limitations:

- Cost scales linearly with context size

- Latency increases with context length

- Not all models support very long contexts

- “Lost in the middle” problem: models may struggle to attend to information in the middle of very long contexts

Smart retrieval: fetch only what’s relevant

Retrieval-Augmented Generation stores information externally (in vector databases or search indexes) and retrieves only the relevant pieces for each query. This approach is the foundation of most enterprise AI applications.

Advantages:

- Handles unlimited knowledge bases

- Cost-efficient (only relevant context is sent to the model)

- Can be updated in real-time without retraining

- Works with any model size

Limitations:

- Requires additional infrastructure (vector database, embedding pipeline)

- Retrieval quality determines answer quality

- May miss context that seems irrelevant but is actually important

We’ll dive deep into RAG in the Inference Techniques section.

Emerging: persistent memory across sessions

Newer systems implement persistent memory that carries across conversations. Examples include:

- ChatGPT Memory: OpenAI’s system that extracts and stores user preferences across sessions

- Claude Memory: Anthropic’s implementation of cross-conversation context

- Custom Memory Systems: Enterprise implementations that store user profiles, preferences, and interaction summaries in databases

How it works:

- After each interaction, important facts are extracted and stored

- Before each new interaction, relevant memories are retrieved

- These memories are injected into the prompt alongside the current query

Advantages:

- Persistent across sessions

- Personalized experiences

- Can grow indefinitely

Limitations:

- Privacy concerns (what is remembered and for how long?)

- Memory accuracy and relevance degrade over time

- Adds complexity to the system architecture

Types of Memory Content

Regardless of the implementation approach, memory content typically falls into three categories:

-

Semantic Memory (Facts & Knowledge):

- Stores specific facts and information

- Example: User preferences, biographical details, or domain-specific knowledge

- Implementation: Profile documents, knowledge bases, vector databases

-

Episodic Memory (Experiences & Interactions):

- Records specific interactions or conversations

- Example: Previous troubleshooting steps in a support conversation

- Implementation: Conversation history, interaction logs

-

Procedural Memory (Instructions & Rules):

- Defines how the model should behave

- Example: System prompts, formatting rules, response guidelines

- Implementation: System prompts, instruction fine-tuning

Context Management

Effective context management is crucial for maintaining coherent conversations and controlling costs:

- Keep track of token usage

- Use summarization for long conversations

- Prioritize recent and relevant information

Think about it this way…

Imagine trying to remember a phone number someone is telling you: Miller’s Law tells us that we can probably hold about 7 digits in our short-term memory easily. If they keep adding more numbers (like an extension, country code, etc.), you’ll start dropping the earlier digits unless you write them down or group them meaningfully. LLMs work similarly with their context window – they can only “remember” a certain amount of information at once.

Learning and Training

Understanding how LLMs manage context leads us to a deeper question: how do these models learn to understand and process information in the first place?

How Does the AI Learn These Relationships?

To understand how AI models learn, let’s use a simple analogy: imagine teaching a child to recognize animals.

Weights: Think of weights as the “strength” of connections in the AI’s “brain”:

- Just as a child learns that “having fur” is strongly connected to “being a mammal”

- The AI learns that certain patterns in text are strongly connected to certain meanings

- These connections are represented by numbers (weights) that the AI adjusts as it learns

Biases: These are like the AI’s default assumptions or starting points:

- They help the model make better predictions based on common patterns

- For example, after “The sun is…” the model might default to words like “bright” or “hot”

- However, biases can also reflect problematic assumptions from training data

Important Note About Bias

Just like humans can develop biases from their environment, AI models can learn biases from their training data. For example, they might associate certain jobs with specific genders or favor certain cultural perspectives. Addressing these biases is an important part of AI development.

The Importance of Quality Data

Just as a child learns better from good educational materials, AI models need high-quality training data to perform well. Here’s what makes data “good”:

-

Diversity: The data should include:

- Different writing styles (formal, casual, technical)

- Various topics and perspectives

- Multiple languages and dialects

-

Quality: Like choosing good textbooks for students:

- Accurate and reliable information

- Well-written and clear content

- Free from errors and inappropriate material

Looking Ahead

In later chapters, we’ll explore more deeply how the quality of training data affects not just model performance but also security. Poisoned training data is a real attack vector that can cause models to produce biased, incorrect, or harmful outputs – a topic we’ll cover in Chapter 2.

Key Takeaways

- Tokenization converts text into numerical units that LLMs process, and different tokenizers produce different results from the same input

- The Transformer architecture’s attention mechanism enables models to weigh the relevance of all tokens simultaneously rather than processing sequentially

- Context windows define how much information an LLM can process at once, ranging from early 512-token limits to modern 2M+ token capacities

- LLMs are inherently stateless – “memory” is implemented through external systems like conversation history, RAG, and persistent memory layers

- Training quality (data diversity, accuracy, and representation) directly shapes model capabilities, biases, and security properties

Test Your Knowledge

Ready to test your understanding of LLM technical foundations? Head to the quiz to check your knowledge.

Up next

Now that we understand how LLMs represent and process information, we’re ready to put this knowledge into practice. In the next section, we’ll dive into prompt engineering – the art and science of communicating effectively with AI models.